The Pentagon finally signed the check. Palantir is now the "core" of the U.S. military’s AI strategy. The press releases read like a victory lap for Silicon Valley, promising a world where data-driven decisions replace the fog of war. They want you to believe this is the moment the American war machine finally turns into a sleek, algorithmic predator.

They’re wrong.

The "core system" memo isn't a sign of progress. It is a massive, expensive admission of failure. By standardizing on a single, proprietary stack for military intelligence, the Department of Defense (DoD) hasn't modernized; it has centralized its own obsolescence. We aren't building a smarter military. We are building a digital Maginot Line—an expensive, rigid defense system designed for a type of war that will never happen again.

The Myth of the "Single Pane of Glass"

The central argument for the Palantir adoption is the dream of the "single pane of glass." General officers want a dashboard. They want to see every tank, every drone, and every supply line on one screen, updated in real time. It sounds logical. It sounds efficient.

In reality, it is a strategic disaster.

Centralization is the enemy of resilience. When you funnel all battlefield data through a single proprietary architecture, you create one high-value target for adversarial cyber and electronic warfare. If an opponent like China or a sophisticated non-state actor can poison the data stream or find a zero-day in the core platform, the entire U.S. military decision-making process doesn't just slow down. It breaks.
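
A back-of-the-envelope sketch makes the point. It assumes, purely for illustration, that each system is compromised independently with the same probability; the function name and the numbers are hypothetical, not drawn from any real assessment.

```python
import math

def p_total_blackout(p_compromised: float, n_independent_systems: int) -> float:
    """Probability that every system is compromised at the same time.

    Assumes compromises are independent and equally likely, which is a
    simplifying assumption for illustration only.
    """
    return p_compromised ** n_independent_systems

# Hypothetical 10% chance that any one system is compromised.
p = 0.10

centralized = p_total_blackout(p, 1)    # one core system: lose it, lose everything
decentralized = p_total_blackout(p, 5)  # five independent systems must all fall

print(f"single core: {centralized:.5f}")
print(f"five independent systems: {decentralized:.5f}")
```

Under those assumptions, the chance of a total blackout drops by four orders of magnitude when five systems have to fail together. Real systems share dependencies, so the true gap would be smaller, but the direction holds.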

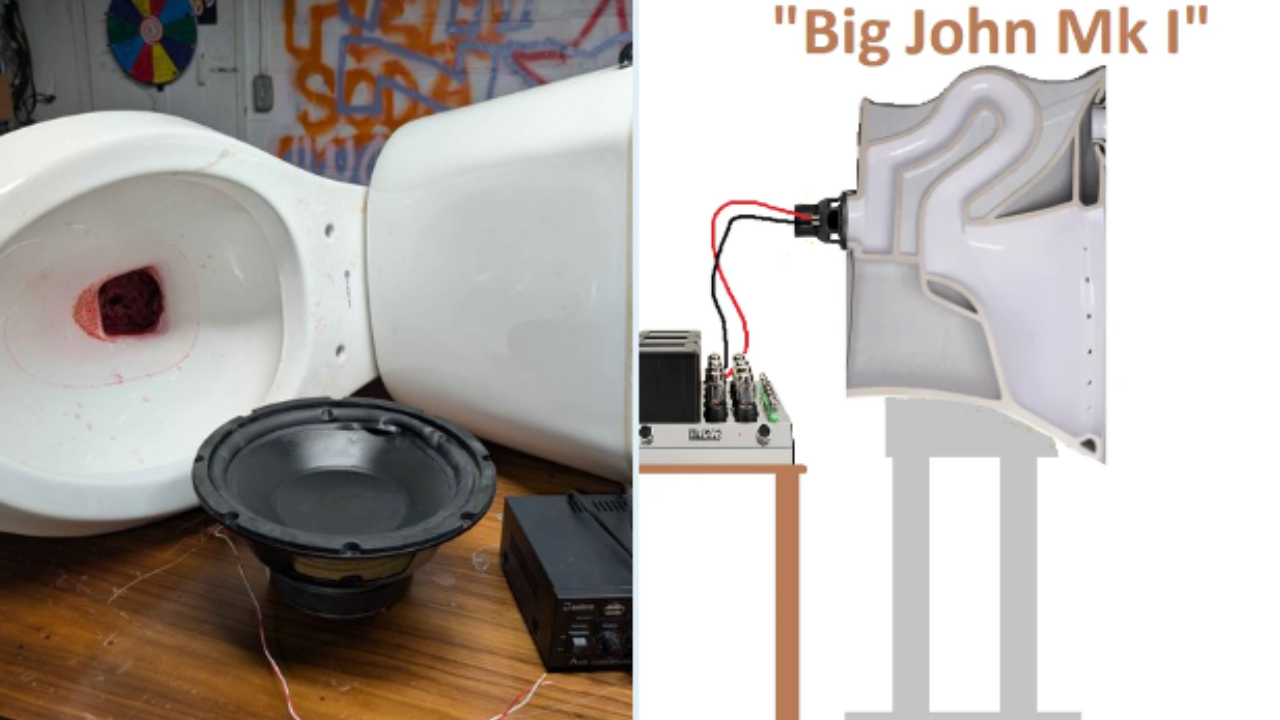

I have seen legacy defense contractors fleece the taxpayer for decades, but this is different. This isn't just about overpaying for a toilet seat; it’s about architecting a system that forces every branch of the military to speak one "language" owned by a single private entity. That isn't interoperability. That is a monopoly on truth.

Why "Predictive" is Just a Fancy Word for "Biased"

The memo touts AI’s ability to predict enemy movements. This is where the logic falls off a cliff. AI, by its very nature, looks backward. It identifies patterns in historical data and assumes the future will look like a slightly modified version of the past.

Modern warfare is defined by the "Black Swan": the unexpected tactical shift that defies historical patterns. Look at the proliferation of $500 FPV drones in Ukraine. No "core AI system" trained on 2010s insurgent data or Cold War doctrine predicted that cheap, off-the-shelf plastic toys would turn $10 million tanks into scrap metal.

By the time the Palantir system is fully integrated across the DoD, the data it was trained on will be irrelevant. We are teaching a machine to win the last war while our adversaries are busy inventing the next one.

The Data Garbage Disposal

The DoD loves to talk about its "vast data lakes." Most of that data is garbage. It’s unformatted, contradictory, and often manually entered by exhausted privates who just want to go to sleep.

- Inconsistent Metadata: A tank in a Navy database is categorized differently than a tank in an Army log.

- Sensor Noise: Modern battlefields are flooded with electronic interference that produces "ghost" signals.

- Adversarial Injection: It takes very little effort to feed an AI "hallucination fodder."

When you pump this sewage into a high-powered AI, you don't get insights. You get high-velocity, high-confidence errors. We are automating the process of being wrong.
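
Here is a minimal sketch of how that happens with the metadata problem alone. Every record, field name, and category label below is invented for illustration; no real DoD schema is implied.

```python
from collections import Counter

# Hypothetical records from two services describing the same kind of asset.
army_log = [
    {"id": "A-1", "category": "tank"},
    {"id": "A-2", "category": "tank"},
]
navy_db = [
    {"id": "N-1", "category": "armored vehicle, tracked"},
    {"id": "N-2", "category": "TANK "},  # stray capitals and trailing space
]

def naive_count(records):
    """Count assets by raw category string, as a naive pipeline might."""
    return Counter(r["category"] for r in records)

# Hypothetical mapping that someone would have to maintain by hand.
CANONICAL = {
    "tank": "tank",
    "armored vehicle, tracked": "tank",
}

def normalized_count(records):
    """Count assets after trimming, lowercasing, and mapping to one label."""
    counts = Counter()
    for r in records:
        key = r["category"].strip().lower()
        counts[CANONICAL.get(key, "unknown")] += 1
    return counts

merged = army_log + navy_db
naive = naive_count(merged)
clean = normalized_count(merged)

print("naive tank count:", naive["tank"])
print("normalized tank count:", clean["tank"])
```

The naive pipeline reports two tanks with full confidence. There are four. Nothing crashed, nothing raised a warning, and the dashboard looks fine.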

The Procurement Trap

The Pentagon didn't choose the best technology. They chose the most aggressive lobbyist. Palantir picked up Project Maven after Google walked away from it in 2018, not because their code was superior, but because they understood the Washington bureaucracy. They realized that in the DoD, a closed ecosystem is a feature, not a bug.

Once a system becomes the "core," it is nearly impossible to rip out: every downstream workflow, data format, and training program gets built around it. The result is vendor lock-in of epic proportions.

The Cost of Proprietary Secrecy

When the military builds its own software (or uses open-source standards), it owns the "math." When it buys a proprietary AI system, the math is a trade secret.

Imagine a scenario where a mid-level commander questions why the AI is recommending a specific strike. In a proprietary system, that commander can't audit the weights or the training data. They are told to "trust the platform." This creates a culture of intellectual laziness. Commanders stop thinking and start following the dashboard.

The False Promise of "Speed"

Proponents argue that AI will allow the military to move at "machine speed." This is a fundamental misunderstanding of the OODA loop (Observe, Orient, Decide, Act).

Speed without orientation is just a faster way to crash.

- Observation: Sensors are easily fooled.

- Orientation: AI lacks context. It doesn't know the political nuance of a border skirmish versus an invasion.

- Decision: Humans are now "on the loop," but as the system gets faster, the human becomes a rubber stamp.

- Action: The "Action" phase is still limited by the physics of moving fuel, ammo, and people.

If the AI decides we need to strike in 30 seconds, but the logistics chain takes 30 hours to catch up, the "machine speed" of the software is useless. It’s like putting a Ferrari engine in a lawnmower.
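
The arithmetic is worth running. The sketch below uses the 30-second and 30-hour figures from above; the 30-minute baseline for a human decision is an assumption added for comparison, and the function name is hypothetical.

```python
def end_to_end_hours(decide_seconds: float, act_hours: float) -> float:
    """Total time from decision start to completed action, in hours."""
    return decide_seconds / 3600 + act_hours

LOGISTICS_HOURS = 30  # fuel, ammo, and people still move at the speed of physics

# Assumed baseline: a human staff takes 30 minutes to reach the decision.
before = end_to_end_hours(decide_seconds=30 * 60, act_hours=LOGISTICS_HOURS)

# With the AI: the decision arrives in 30 seconds.
after = end_to_end_hours(decide_seconds=30, act_hours=LOGISTICS_HOURS)

decision_speedup = (30 * 60) / 30
overall_speedup = before / after

print(f"decision step is {decision_speedup:.0f}x faster")
print(f"whole loop is {overall_speedup:.3f}x faster")
```

Under that assumed baseline, a 60x faster decision buys less than a 2 percent faster loop. The bottleneck was never the decision.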

What No One Wants to Admit: We Need Less "Core," More "Chaos"

The fix isn't a better AI. The fix is a move away from the "core system" philosophy entirely.

If we want to win, we need radical decentralization. Instead of one massive Palantir contract, the Pentagon should be funding 5,000 small, disposable software projects. We need "edge" AI that lives on a single drone and does one thing, like identifying a specific jammer, and then dies. We don't need a God's-eye view; we need a swarm of eyes that don't need a central brain to function.

The current strategy assumes we can out-compute our enemies. But computing power is a commodity. Our enemies have access to the same GPUs and the same transformer architectures. The advantage won't come from having the "core" system; it will come from having the most adaptable one.

The Brutal Reality of AI in Combat

Let’s be honest about what "AI as a core system" actually means for the soldier on the ground. It means more screens. It means more cables. It means more things that require a satellite connection to a server in Virginia just to tell you if there’s a sniper in the building next door.

If the link goes down, the "core system" becomes a brick.

We are trading rugged, independent capability for a fragile, high-tech tether. We are betting the security of the next century on the idea that our "data" is a weapon. It isn't. Data is a liability. It’s something to be stolen, spoofed, and used against you.

The Pentagon isn't buying a revolution. It’s buying an expensive insurance policy from a Silicon Valley-born firm that knows how to speak the language of "innovation" while delivering the same old bureaucratic bloat.

Stop asking if Palantir is "good enough" for the military. Ask why the military is so terrified of a decentralized future that it’s willing to hand the keys to its entire infrastructure to a single company.

The memo says Palantir is the core. Common sense says the core is rotten.

Throw the dashboard in the trash and get back to teaching soldiers how to fight without a Wi-Fi signal.