The Department of Homeland Security doesn't usually broadcast its internal wish lists. But a massive data breach at a federal contractor just did the job for it. We aren't looking at some distant, sci-fi future anymore. The leaked documents show a government obsessed with tracking movement, predicting behavior, and automating suspicion. It’s a messy, high-stakes pivot toward algorithmic policing that most Americans are completely unprepared for.

If you think this is just about "better security," you’re missing the point. The leaked materials describe a sprawling infrastructure designed to ingest billions of data points—from social media posts to travel records—and spit out risk scores. It’s the kind of thing that makes "Minority Report" look like a children’s book. We’re talking about a system where your digital footprint determines how much "friction" you encounter at a border or an airport, often without you ever knowing why.

The Secret Bidding War for Your Data

Federal agencies have a massive appetite for information, but they don't always have the tools to digest it. That’s where private tech firms come in. The hacked files highlight a lucrative ecosystem of contractors promising DHS the world. These companies sell "analytics platforms" that can supposedly identify "potential threats" by scanning public records and private databases.

One of the most concerning aspects of the leak involves the sheer scale of the data collection. It’s not just about suspected criminals. It's about everyone. The goal is total situational awareness. When a contractor pitches an AI tool to DHS, they aren't pitching a scalpel. They’re pitching a vacuum. They want every scrap of information they can get their hands on because more data means more training for their models.

This creates a dangerous feedback loop. The government pays for the tool, the tool needs data, so the government expands its surveillance to feed the machine. We’ve seen this play out with license plate readers and facial recognition software. The leaked documents show that DHS wants to take this to the next level by integrating these disparate systems into one "unified" view of the individual.

Why Your Social Media is Now a Threat Matrix

You might think your Instagram or X (formerly Twitter) is just for memes and complaining about your coffee. To DHS, it’s a goldmine for "sentiment analysis" and "behavioral prediction." The leaked records detail experiments with AI models that scan social media to find "indicators of radicalization."

The problem? These algorithms are notoriously bad at nuance. They don't get sarcasm. They don't understand cultural context. If you post a joke about "blowing up" a group chat, an automated system might flag you as a high-risk individual. This isn't theoretical. The documents show that these tools are being tested and deployed right now, often with little to no oversight.
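To see why context-blind matching misfires, here is a minimal sketch of a keyword flagger. It is a deliberately naive illustration, not a reconstruction of any tool named in the leak: the watchlist, the function name `naive_flag`, and the sample posts are all made up for this example.

```python
import re

# Hypothetical watchlist, invented for illustration (not from the leaked files).
WATCHLIST = {"blow up", "blowing up", "bomb", "attack"}


def naive_flag(post: str) -> list[str]:
    """Return every watchlist phrase found in a post, ignoring context entirely."""
    text = post.lower()
    return sorted(
        term
        for term in WATCHLIST
        if re.search(rf"\b{re.escape(term)}\b", text)
    )


# Made-up sample posts: two harmless idioms and one neutral sentence.
posts = [
    "My phone keeps blowing up, this group chat is out of control",
    "That concert was the bomb",
    "Grabbing coffee before my flight",
]

for post in posts:
    hits = naive_flag(post)
    print("FLAGGED" if hits else "ok", hits, "-", post)
```

Both idioms get flagged and only the coffee post passes. Real systems are more elaborate than a keyword list, but the failure mode is the same whenever the model scores words instead of meaning.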

I’ve looked at how these systems work in practice. They often rely on "proxy variables." If the AI can't find a direct link to a crime, it looks for patterns that it thinks correlate with trouble. This could be who you follow, what hashtags you use, or even the language you speak. It’s a digital version of "stop and frisk," but it happens in the cloud and you can't talk your way out of it.
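A toy sketch makes the proxy problem concrete. Everything here is hypothetical: the records, the group and language labels, the weights in `risk_score`, and the threshold are invented for illustration and do not come from the leaked documents. The point is only that a score can ignore group membership entirely and still split cleanly along group lines.

```python
from collections import defaultdict

# Made-up records: (group, language used in posts, follows a flagged account).
# "group" is never passed to the scoring function below.
PEOPLE = [
    ("group_x", "lang_1", False),
    ("group_x", "lang_1", False),
    ("group_x", "lang_2", False),
    ("group_y", "lang_2", False),
    ("group_y", "lang_2", False),
    ("group_y", "lang_2", True),
]


def risk_score(language: str, follows_flagged: bool) -> int:
    """Hypothetical scoring rule built only from proxy variables."""
    score = 0
    if language == "lang_2":
        score += 2  # language acts as a stand-in for group membership
    if follows_flagged:
        score += 3
    return score


def flag_rate_by_group(people, threshold: int = 2) -> dict:
    """Share of each group whose score meets the threshold."""
    flagged = defaultdict(int)
    total = defaultdict(int)
    for group, language, follows_flagged in people:
        total[group] += 1
        if risk_score(language, follows_flagged) >= threshold:
            flagged[group] += 1
    return {group: flagged[group] / total[group] for group in total}


rates = flag_rate_by_group(PEOPLE)
for group, rate in rates.items():
    print(f"{group}: {rate:.0%} flagged")
```

In this toy data, every member of `group_y` is flagged and only a third of `group_x` is, even though the rule never looks at the group label.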

The Myth of Objective Algorithms

The biggest lie in the tech world is that math can't be biased. The DHS documents are littered with the word "objective," as if using an algorithm suddenly removes human prejudice from the equation. It doesn’t. It just hides the prejudice inside a black box.

When you train an AI on historical data, you're training it on every past mistake, every biased arrest, and every systemic inequality of the last fifty years. If the "old" way of policing targeted specific neighborhoods, the "new" AI will just tell the officers to go back to those same neighborhoods. The leaked files don't show much concern for this. Instead, they focus on "efficiency" and "speed."
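The "go back to the same neighborhoods" dynamic can be sketched as a toy simulation. This is a simplified model under stated assumptions, not how any DHS system is documented to work: two hypothetical areas have the same underlying incident rate, patrols always go to whichever area has the most recorded arrests, and arrests are only recorded where patrols are sent. The names and numbers are invented.

```python
def simulate(recorded, true_rate, patrols_per_round, rounds):
    """Send every patrol to the area with the most recorded arrests, each round.

    Arrests are only recorded where patrols go, so the historical record
    steers future patrols regardless of the true underlying rates.
    """
    recorded = dict(recorded)  # copy so the caller's data is untouched
    for _ in range(rounds):
        hotspot = max(recorded, key=recorded.get)
        recorded[hotspot] += patrols_per_round * true_rate[hotspot]
    return recorded


# Hypothetical starting point: area A has slightly more historical arrests,
# but both areas have the same true incident rate.
history = {"A": 12, "B": 10}
true_rate = {"A": 0.5, "B": 0.5}

result = simulate(history, true_rate, patrols_per_round=10, rounds=5)
print(result)
```

After five rounds, area A's record has more than tripled while area B's has not moved, even though nothing distinguishes the two areas except the starting data. The model then looks "right" about A because it only ever checked A.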

The Accuracy Gap Nobody Mentions

- Facial recognition has much higher error rates for people with darker skin tones.

- Predictive policing often creates "loops" where police are sent to areas because they’ve made arrests there before, not because there's a new threat.

- Automated vetting systems can flag people based on names that are common in specific ethnic groups.

These aren't bugs; they're features of a system built on flawed data. The DHS leak shows that while the government is aware of these "accuracy challenges," the push to deploy is winning out over the need for fairness. They’d rather have a tool that’s 70% right and fast than a system that’s 100% fair and slow.

Your Biometrics as a Permanent Passport

We’ve all seen the facial recognition kiosks at the airport. They’re convenient, right? The leaked data suggests those kiosks are just the tip of the iceberg. DHS wants a "biometric exit" system that tracks everyone entering or leaving the country using face, iris, and fingerprint scans.

This isn't just about catching people with fake passports. It’s about creating a permanent, unchangeable ID for every human being that crosses a border. Unlike a password, you can’t change your face. If a government database gets hacked—ironic, considering where this info came from—your most personal data is out there forever.

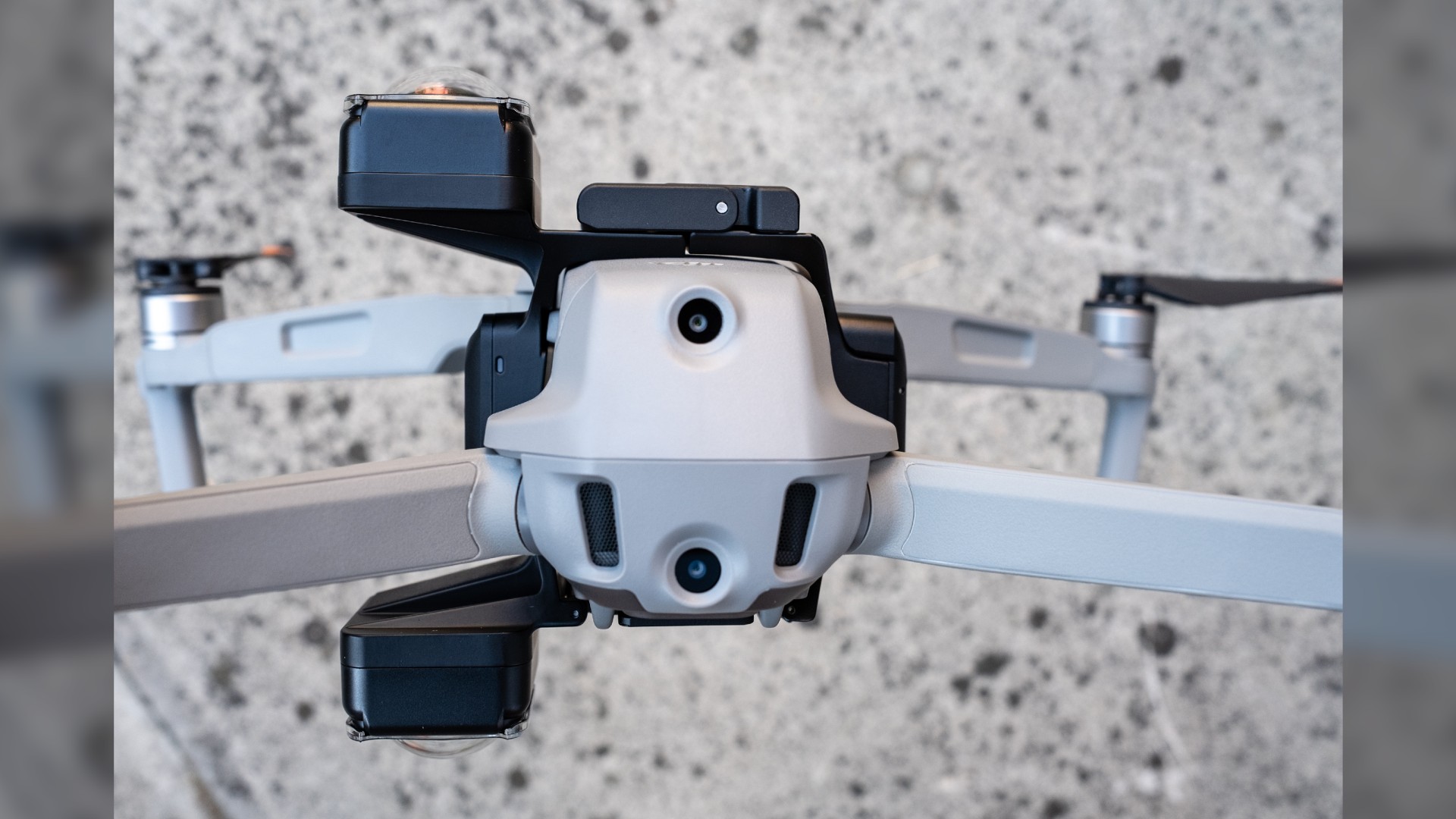

The leak shows that DHS is looking into "biometrics on the move." This means cameras that can identify you while you’re walking through a terminal or standing in a crowd, without you ever having to stop or look at a lens. It’s passive, it’s constant, and it’s being sold as a way to "improve the traveler experience." Don't buy it. It's about control.

The Contractor Loophole

Why does DHS use contractors for this? Because contractors can do things the government isn't allowed to do. If the government wants to track your location, it usually needs a warrant. If a private company scrapes your location data from apps and sells it to the government, that’s just a "business transaction."

The hacked data lists several companies that act as middlemen. They buy data from weather apps, games, and social media sites, then package it into "intelligence products" for DHS. It’s a massive end-run around the Fourth Amendment. The leaked documents show that DHS is heavily reliant on these private data streams to build its surveillance mosaics.

This relationship is cozy. Too cozy. Former DHS officials often end up on the boards of these tech companies, and the companies spend millions lobbying for bigger surveillance budgets. It’s a self-sustaining machine that feeds on your privacy.

What You Can Actually Do

It’s easy to feel like privacy is dead and we should just give up. That’s exactly what they want you to think. But when data like this leaks, it gives us a window into the machine. We can see the cracks.

You need to be proactive about your digital footprint. This isn't about being "paranoid"; it's about being informed. Use encrypted messaging like Signal. Audit your app permissions—does that calculator app really need your location? More importantly, support organizations like the Electronic Frontier Foundation (EFF) or the ACLU. They’re the ones actually fighting these legal battles in court.

Demand transparency from your local representatives. Ask them if your local police department is sharing data with DHS through "fusion centers." These centers are the hubs where local, state, and federal data get mashed together. Most people don't even know they exist, but the leaked documents show they’re a critical part of the DHS surveillance strategy.

Stop treating your data like trash. It’s the most valuable thing you own in the 21st century. If the government is willing to pay billions to track it, it’s worth protecting. The "nothing to hide" argument is a trap. You might have nothing to hide today, but you have no idea what an algorithm will decide is "suspicious" tomorrow.

Check your privacy settings on every platform you use. Turn off "precise location" whenever possible. Use a VPN to mask your IP address. These are small steps, but they increase the "cost" of tracking you. The more people who do this, the harder it is for these automated systems to build a complete profile. Don't make it easy for them.